Bluehost Self-Managed VPS: How to Install Ollama

Ollama is a tool that lets you run large language models (LLMs) on a machine you control, such as your own computer or a VPS, instead of relying on cloud-based AI services. It makes it easy to download, run, and interact with AI models, such as chatbots, and a downloaded model can run entirely offline.

Key features of Ollama

- Runs AI models locally (macOS, Linux, and Windows are supported)

- No internet required after model download

- Simple command-line interface

- Privacy-friendly (your data stays on your machine)

- Optimized for consumer hardware
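
Besides the command line, a running Ollama server also answers HTTP requests on the local machine, which is one way to see that prompts never leave the server. The following is a minimal sketch, assuming the server listens on its default address (`http://localhost:11434`) and that a model named `llama3` has already been downloaded; both the address and the model name are assumptions to adjust for your setup.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address and port.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a POST request for Ollama's /api/generate endpoint."""
    # "stream": False asks for one JSON object instead of a stream of chunks.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, calling `generate("llama3", "Why is the sky blue?")` should return the model's answer as a string. The request goes to `localhost` only, so no external service is contacted.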

What models can Ollama run?

Ollama supports many popular open-source LLMs, for example:

- Llama 2 / Llama 3

- Mistral

- Gemma

- Phi

- Code-focused models (for programming help)
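
Ollama refers to models by a name and an optional tag, for example `llama3:8b`, and falls back to the `latest` tag when none is given. As a rough sketch of how to see which models are already on the server, the code below reads the `/api/tags` endpoint. The default address is an assumption, and the helper names (`split_model_ref`, `model_names`, `list_installed_models`) are made up for this example.

```python
import json
import urllib.request

# Assumption: Ollama is listening on its default local address and port.
OLLAMA_URL = "http://localhost:11434"


def split_model_ref(ref: str) -> tuple[str, str]:
    """Split 'name:tag' into (name, tag), using 'latest' when no tag is given."""
    name, _, tag = ref.partition(":")
    return name, tag or "latest"


def model_names(tags_response: dict) -> list[str]:
    """Extract model references from a decoded /api/tags response."""
    return [model["name"] for model in tags_response.get("models", [])]


def list_installed_models(timeout: float = 10.0) -> list[str]:
    """Ask the local server which models have been downloaded."""
    with urllib.request.urlopen(f"{OLLAMA_URL}/api/tags", timeout=timeout) as resp:
        return model_names(json.loads(resp.read()))
```

On a freshly installed server, `list_installed_models()` will typically return an empty list until a model has been downloaded.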

Install Ollama from the Bluehost Portal

- Log in to your Bluehost Portal.

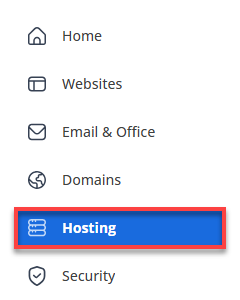

- Click Hosting in the left-hand menu.

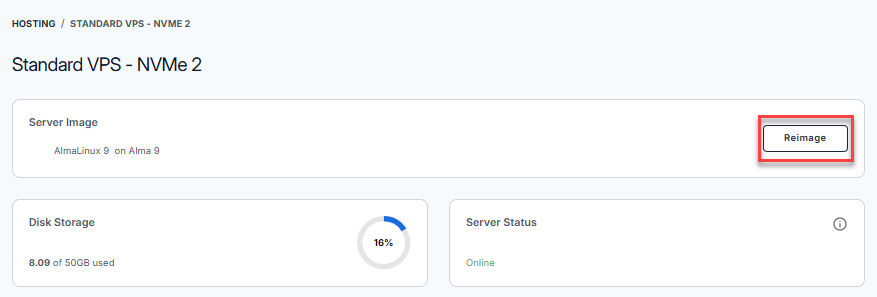

- Click the MANAGE button on the Self-Managed VPS package.

- Navigate to the Server Image section, then click the Reimage button.

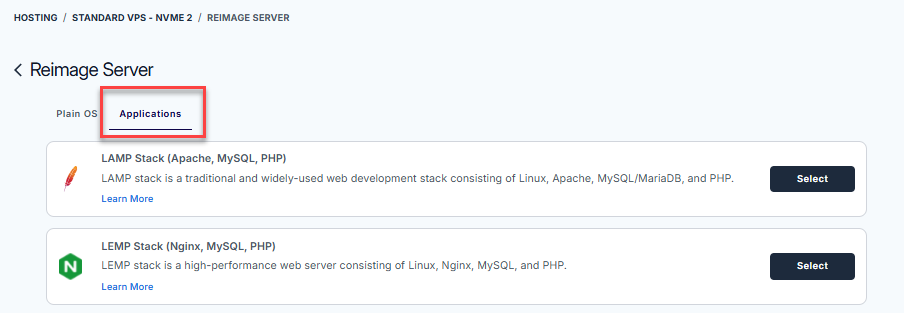

- On the Reimage server page, select Applications.

- From the list of available applications, find Ollama and click Select.

- In the pop-up message, type reimage in the field to confirm, then click Proceed.

- Wait a few seconds for the installation to complete.
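
Once the reimage finishes, one way to confirm that Ollama is running is to connect to the VPS and query the server's version endpoint. This is a minimal sketch, not an official Bluehost check. It assumes the image starts the Ollama service automatically and that it listens on the default address `http://localhost:11434`; the function name `ollama_version` is made up for this example.

```python
import json
import urllib.error
import urllib.request
from typing import Optional


def ollama_version(
    base_url: str = "http://localhost:11434",  # assumption: default address
    timeout: float = 5.0,
) -> Optional[str]:
    """Return the server's reported version, or None if it cannot be reached."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/version", timeout=timeout) as resp:
            return json.loads(resp.read()).get("version")
    except (urllib.error.URLError, OSError, ValueError):
        # Connection refused, timeout, or a reply that is not valid JSON.
        return None
```

Run on the VPS, `ollama_version()` returns a version string when the service is up and `None` when nothing answers on that port, in which case the service may still be starting or may use a different address.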

Summary

Pairing Ollama with a Bluehost Self-Managed VPS gives you dedicated resources, full control over configuration, and the flexibility to scale beyond a local machine, while still keeping your AI environment private. With Ollama deployed on a self-managed VPS, teams can experiment, prototype, or support internal tools with consistent performance and greater reliability. Together, Ollama and a Bluehost VPS offer a practical path to owning your AI stack: secure, customizable, and built on infrastructure you control.