Most companies trying to “rank in ChatGPT” are solving the wrong problem.

They assume visibility in AI answers works like traditional search, so they invest in SEO: more content, tighter keyword targeting, stronger backlinks.

But generative AI systems don’t rank pages the way Google does. They synthesize answers. And when a system is generating a response instead of listing links, the decision framework changes.

AI doesn’t reward optimization alone. It rewards certainty.

If ChatGPT, Gemini or Perplexity isn’t mentioning your brand, it’s rarely because you’re too small or too new. In most cases, it’s because the system lacks enough structured, consistent and externally validated signals to confidently include you.

And in generative search, confidence isn’t optional. It’s the filter.

AI doesn’t rank. It decides what’s safe to say.

Generative AI systems don’t return a list of links; they construct answers. And when a system is composing a response instead of ordering results, every brand it includes becomes an editorial choice.

That inclusion isn’t accidental.

As explored in the analysis on why AI isn’t killing search, the shift is not about replacing search engines. It is about changing how answers are assembled and which entities are considered safe to reference.

Behind the scenes, the model is effectively evaluating a single question: Is this entity clear, validated and consistent enough to include without introducing risk?

Because once an AI system names a company inside a generated answer, it isn’t just ranking the company; it’s endorsing it.

That shift changes everything. Visibility in AI systems is fundamentally not an SEO problem. It’s a verifiability problem.

Why new brands struggle (and why it’s not about age)

Many founders assume they need years of backlinks and domain authority before AI tools surface them.

But recent industry experiments tracking brand-new companies from zero digital footprint show something different.

When brand mentions and entity signals are clearly structured and externally validated, AI mentions can begin within weeks, not years.

The difference isn’t link volume. It’s signal clarity.

Older companies often win by default because they’ve accumulated consistent mentions across the web.

New companies lose because their signals are fragmented.

The 3 layers that make a brand verifiable

If you strip away the SEO noise, AI inclusion usually depends on three structural layers.

1. Entity clarity: Are you machine-readable as a “thing”?

AI systems rely on clean identity signals. They need to understand, without ambiguity, who you are, what category you belong to and how you describe yourself across the web.

In practice, that means:

- Consistent branding, without spelling variations

- One clearly defined category description

- One primary positioning statement repeated across platforms

- Aligned metadata and About-page language

When your LinkedIn bio says one thing, your homepage frames you differently and third-party directories categorize you inconsistently, you introduce signal conflict.

And in AI systems, conflicting signals don’t create nuance.

They create doubt.

Ambiguity isn’t neutral.

It’s disqualifying.
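One way to spot signal conflict before an AI system does is to compare how each platform describes you. The sketch below is a minimal illustration, not a standard tool: the `profiles` data, the brand name "Acme Analytics" and the function names are all hypothetical, and it assumes you have already copied your name and category text from each platform by hand.

```python
import re

def normalize(text: str) -> str:
    """Lowercase, drop punctuation and collapse whitespace so trivial differences are ignored."""
    text = re.sub(r"[^a-z0-9\s]", "", text.lower())
    return re.sub(r"\s+", " ", text).strip()

def find_conflicts(profiles: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Group sources by normalized value per field; return fields with more than one distinct value."""
    conflicts = {}
    fields = {field for data in profiles.values() for field in data}
    for field in sorted(fields):
        groups: dict[str, list[str]] = {}
        for source, data in profiles.items():
            if field in data:
                groups.setdefault(normalize(data[field]), []).append(source)
        if len(groups) > 1:
            conflicts[field] = groups
    return conflicts

# Hypothetical example data: how one brand appears on three platforms.
profiles = {
    "homepage": {"name": "Acme Analytics", "category": "Revenue analytics platform"},
    "linkedin": {"name": "Acme Analytics", "category": "revenue analytics platform"},
    "directory": {"name": "AcmeAnalytics", "category": "Business intelligence software"},
}

conflicts = find_conflicts(profiles)
for field, groups in conflicts.items():
    print(field, groups)
```

Here the directory listing disagrees on both the spelling of the name and the category, which is exactly the kind of fragmentation worth cleaning up first.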

2. Retrieval structure: Is your content easy to extract?

Long-form guides aren’t inherently valuable to AI systems. What matters is extractability.

Generative models don’t reward length; they reward clarity.

The content that gets cited most often tends to share a common structure: it presents the conclusion early, uses clean subheadings, defines terms explicitly and avoids unnecessary narrative padding. The language is direct enough to be quoted without modification.

In other words, it is easy to lift.

When your strongest explanation is buried 1,200 words deep inside layered storytelling, there’s a good chance the model never isolates it as a stable answer unit. What feels comprehensive to a human reader can feel diffuse to a retrieval system.

AI systems prioritize clarity over cleverness.

The sharper and more self-contained your insights are, the more likely they are to surface.
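A rough way to audit extractability is to check whether each paragraph could be quoted on its own. The sketch below is a crude heuristic under stated assumptions, not a description of how any retrieval system works: the opener list, the 80-word limit and the function name are illustrative choices.

```python
# Openers that usually point back at earlier text (an illustrative, incomplete list).
DANGLING_OPENERS = ("this ", "that ", "it ", "they ", "these ", "those ", "as mentioned", "as noted")

def extractability_flags(paragraph: str, term: str, max_words: int = 80) -> list[str]:
    """Return reasons a paragraph may not stand alone as an answer about `term`."""
    flags = []
    text = paragraph.strip()
    lowered = text.lower()
    if lowered.startswith(DANGLING_OPENERS):
        flags.append("opens with a reference to earlier text")
    if term.lower() not in lowered:
        flags.append("never names the term it explains")
    if len(text.split()) > max_words:
        flags.append("longer than max_words")
    return flags

# Hypothetical paragraphs for a hypothetical category.
good = "Revenue analytics is software that ties pipeline and billing data to a single forecast."
bad = "This is why it matters so much to the teams we described earlier."

print(extractability_flags(good, "revenue analytics"))
print(extractability_flags(bad, "revenue analytics"))
```

A paragraph that returns no flags is not guaranteed to be cited; it simply passes a basic test of being self-contained.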

3. External validation: Does anyone else corroborate you?

This is where most brands stall.

AI systems don’t rely solely on what you say about yourself. Self-published claims are signals, but they’re incomplete ones. Generative models look for external confirmation before confidently including a company inside an answer.

They scan for corroboration across the broader web ecosystem, including:

- Industry-specific directories

- Credible review platforms

- Niche publications covering your category

- Independent third-party mentions

- High-authority platforms such as LinkedIn, Medium or established trade sites

This doesn’t mean you need national press coverage or a feature in a major media outlet.

You need alignment.

When multiple independent sources describe your company in consistent terms, you reduce uncertainty. And reducing uncertainty is what increases inclusion probability.

If your own website is the only place confirming your positioning, your existence or your expertise, the system has little reason to treat you as a stable entity.

From an AI perspective, that makes you high risk to include.

Corroboration isn’t a branding luxury.

It’s a structural requirement.
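To make corroboration concrete, you can tally how many independent domains describe you in the same terms you use yourself. This is a minimal sketch with hypothetical data: the URLs, the `key_terms` and the simple substring match are assumptions, and the mention list is something you would gather manually.

```python
from urllib.parse import urlparse

def corroboration_summary(own_domain: str, key_terms: list[str],
                          mentions: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Split third-party domains into those whose description contains every key term and those that do not."""
    terms = [t.lower() for t in key_terms]
    agreeing, conflicting = set(), set()
    for url, description in mentions:
        domain = urlparse(url).netloc.lower().removeprefix("www.")
        if domain == own_domain:
            continue  # self-published claims do not count as corroboration
        text = description.lower()
        if all(term in text for term in terms):
            agreeing.add(domain)
        else:
            conflicting.add(domain)
    return {"agreeing": sorted(agreeing), "conflicting": sorted(conflicting - agreeing)}

# Hypothetical mentions collected by hand.
mentions = [
    ("https://www.acme.example/about", "Acme Analytics is a revenue analytics platform."),
    ("https://reviews.example/acme", "Acme Analytics: a revenue analytics platform for SaaS teams."),
    ("https://directory.example/listing/acme", "Acme Analytics, business intelligence software."),
    ("https://trade.example/news/acme", "Revenue analytics platform Acme Analytics launches a new tier."),
]

summary = corroboration_summary("acme.example", ["acme analytics", "revenue analytics"], mentions)
print(summary)
```

The domains in the `conflicting` list are the ones to contact or update, since they are the sources introducing uncertainty.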

Why “SEO-first” thinking backfires in AI visibility

An SEO-first strategy isn’t wrong. It’s just incomplete.

Traditional SEO prioritizes measurable performance indicators such as keyword targeting, traffic acquisition, ranking positions and backlink velocity. The objective is visibility within a results page.

AI visibility operates under different logic.

Generative systems prioritize entity consistency, fact stability, cross-source agreement and clear attribution. Instead of asking, “How relevant is this page for a query?” they are effectively asking, “How reliable is this entity within a synthesized answer?”

There is overlap between the two disciplines; strong SEO can support AI inclusion. But they are not the same game, and optimizing for one does not guarantee success in the other.

It’s entirely possible to rank #2 in Google for a high-intent keyword and still never be mentioned in an AI-generated response.

Because AI systems are not ranking your page.

They are evaluating whether your brand is structurally stable, externally validated and consistent enough to insert into a composed answer without introducing risk.

That distinction is subtle.

But it changes the entire strategy.

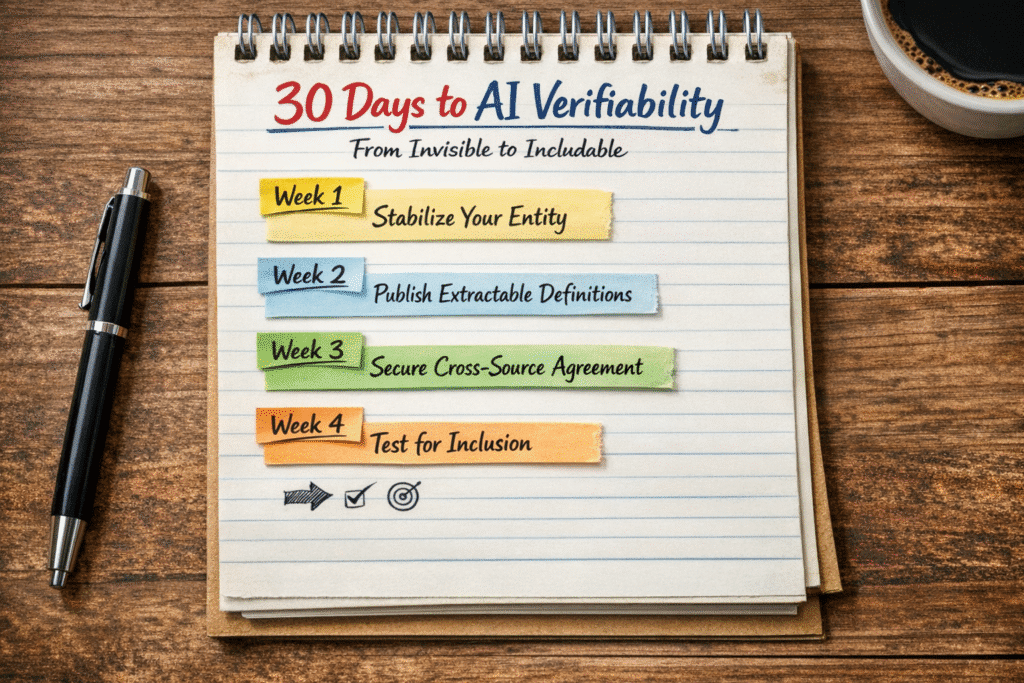

The 30-day shift: From invisible to verifiable

If AI visibility is about certainty, the first month isn’t about scale. It’s about structural stability. Here’s how the progression should unfold.

Week 1: Stabilize your entity

Before chasing visibility, eliminate ambiguity.

Lock in one clear category definition. Align your homepage, LinkedIn, directories and bios around the same positioning language. Clean up naming inconsistencies, taglines and technical metadata.

By the end of this week, your company should look like a single, coherent entity everywhere it appears, not a brand experimenting with its identity in public.

Clarity comes before content.
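For the technical metadata part of Week 1, one common option is schema.org `Organization` markup in JSON-LD. This article does not prescribe a format, and how much weight any particular AI system gives to such markup is not established here, so treat the sketch below as an assumption-laden example. The company details are hypothetical; the property names (`name`, `url`, `description`, `sameAs`) come from schema.org.

```python
import json

def organization_jsonld(name: str, url: str, description: str, profiles: list[str]) -> str:
    """Build a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,                # one spelling, used everywhere
        "url": url,
        "description": description,  # the same positioning statement as your About page
        "sameAs": list(profiles),    # official profiles that refer to the same entity
    }
    return json.dumps(data, indent=2)

# Hypothetical company details.
markup = organization_jsonld(
    name="Acme Analytics",
    url="https://acme.example",
    description="Acme Analytics is a revenue analytics platform for SaaS teams.",
    profiles=["https://www.linkedin.com/company/acme-analytics-example"],
)
print(markup)
```

The output is typically placed in a `<script type="application/ld+json">` tag on the homepage, using the same name and description that appear on every other platform.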

Week 2: Publish extractable definitions

Now give AI systems something structured to retrieve.

Create a tight category explainer and a buyer-focused education piece. Define your space clearly. Distinguish it from adjacent categories. Articulate evaluation criteria.

Don’t write for traffic. Write for extraction.

If a paragraph can’t stand alone as a clear answer, it’s not structured enough.

This shift is not theoretical. Distribution systems are already separating into distinct environments with their own signals and evaluation logic. The February 2026 Google Discover core update made that explicit.

Discover now operates independently from traditional search, prioritizing context, perceived value and user interest over query matching alone.

Week 3: Secure corroboration

Internal clarity is necessary, but not sufficient.

AI systems increase confidence when independent sources describe you consistently. This week is about cross-source alignment: relevant directories, a native authority post on a trusted platform and at least one third-party mention.

You’re reducing perceived risk.

The goal isn’t backlinks. It’s agreement.

Week 4: Benchmark inclusion

Now test inclusion, not rankings.

Analyze whether you appear, how your brand is framed, and whether competitor positioning reflects broader web signals.

Each result reveals a signal gap, either in clarity, structure or validation.

Fix the signals. Retest.
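A simple way to benchmark inclusion is to save the answers you get from a fixed set of prompts and count how often each brand is named. The sketch below assumes the answers were collected by hand and pasted in as text; the brand names and answers are hypothetical, and it makes no calls to any AI service.

```python
import re

def inclusion_rates(answers: list[str], brands: list[str]) -> dict[str, float]:
    """Return, per brand, the share of answers that mention it as a whole word or phrase."""
    rates = {}
    for brand in brands:
        pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
        hits = sum(1 for answer in answers if pattern.search(answer))
        rates[brand] = hits / len(answers) if answers else 0.0
    return rates

# Hypothetical answers saved from four test prompts.
answers = [
    "Popular options include Globex and Initech.",
    "Teams often choose Globex or Acme Analytics.",
    "Globex is a common pick for revenue analytics.",
    "Initech is frequently mentioned for this use case.",
]

rates = inclusion_rates(answers, ["Acme Analytics", "Globex", "Initech"])
print(rates)
```

Rerunning the same prompts after each round of fixes gives you a consistent baseline, so a change in the rate reflects a change in signals rather than a change in questions.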

By Day 30, you may not dominate AI answers, but you’ve crossed the real threshold: you’ve become includable. And in generative search, inclusion is the new ranking.

Inclusion is the new ranking

For twenty years, digital strategy was simple: rank higher, get seen, win the click.

Generative AI breaks that model. There is no results page to climb. No list to compete within. There is only the answer and the handful of companies deemed safe enough to be included inside it.

When an AI system names your brand, you’re not ranking. You’re endorsed. And endorsement follows stricter rules than visibility ever did.

In traditional search, you could compensate for weak positioning with force. Publish more. Build more links. Target more keywords. Even if your identity was fuzzy, you could still fight your way onto page one.

In generative search, fuzziness isn’t penalized. It’s filtered out.

If your brand is inconsistent, poorly defined or weakly corroborated, you don’t slip from #3 to #9. You vanish.

That’s the real shift.

AI doesn’t reward whoever shouts the loudest. It includes whoever feels the safest to cite. Which means the competitive advantage is no longer volume. It’s structural trust.

The brands that dominate AI answers won’t necessarily produce the most content. They’ll be the ones that are unmistakably clear, consistently described across the web and repeatedly validated by independent sources.

They’ll be easy to define.

Easy to verify.

Easy to trust.

SEO optimizes for visibility.

Verifiability optimizes for endorsement.

And in AI search, endorsement is the only visibility that matters.
