Key highlights

- Learn how to optimize vCPU and RAM allocation for memory-intensive AI agents.

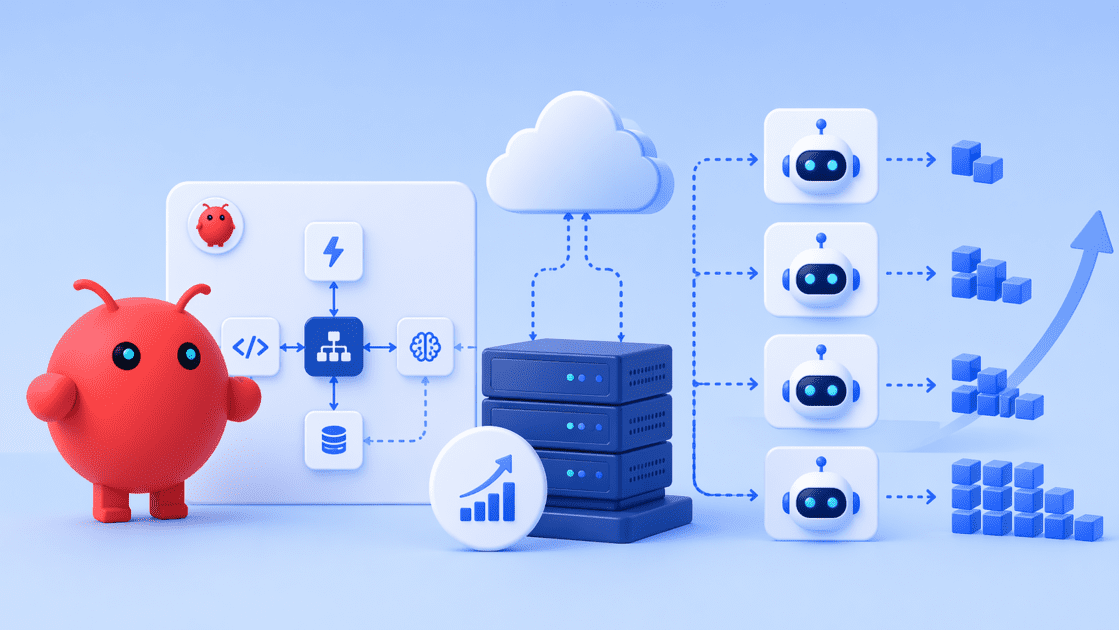

- Discover the architectural advantages of self-hosting OpenClaw on a dedicated VPS.

- Explore how combining n8n with OpenClaw creates a robust, owned automation stack.

- Compare VPS resource tiers to find the perfect configuration for your specific AI workload.

- Understand how to eliminate SaaS dependency and retain full control over your proprietary data.

A small team builds an OpenClaw workflow that works perfectly in testing. Once deployed, it starts failing under real usage: API delays increase, workflows slow down and agents begin to lose context. As workloads grow, simple setups can no longer handle the concurrency, memory and integration demands placed on them. This is a common challenge when scaling OpenClaw workflows for production AI agents.

Scaling OpenClaw workflows requires more than just better code. It requires the right infrastructure, workflow architecture and automation strategy. In this guide, you will learn how to scale OpenClaw workflows efficiently and build reliable AI systems that perform consistently in production environments.

What does it mean to scale OpenClaw workflows?

Scaling OpenClaw workflows means ensuring your AI agents can handle more users, tasks and integrations without slowing down or failing. In early stages, workflows run as simple, single-agent processes. These work in testing but break under real usage due to increased API calls, concurrency and memory demands.

To scale OpenClaw workflows for production AI agents, you need to focus on three areas:

- Compute scalability to support multiple agents running in parallel

- Workflow orchestration to manage multi-step execution without bottlenecks

- State and memory management to maintain context across interactions

Scaling is not just about adding resources. It is about designing systems that remain reliable as complexity grows.

How do you scale OpenClaw workflows effectively?

Effective scaling depends on designing systems that can handle concurrency, manage dependencies and execute tasks reliably under real-world conditions.

As workflows grow, complexity increases across API calls, decision logic and parallel execution. Without a structured approach, this leads to bottlenecks, delays and inconsistent outputs. To scale effectively, you need to focus on how your workflows are designed and executed.

1. Separate AI reasoning from execution

OpenClaw is built for reasoning and decision making, but not every task requires AI processing. Many workflows include repetitive actions like API calls, data transfers or conditional routing.

Separating these responsibilities helps reduce unnecessary load on AI agents. OpenClaw can focus on complex decision-making, while automation tools handle routine execution. This improves performance and allows your system to scale more efficiently.
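As a rough illustration, here is a minimal Python sketch of that split. It assumes a hypothetical task format with a `type` field: routine task types go to plain deterministic functions, and anything else is passed to `call_agent`, a hypothetical placeholder for however your setup invokes OpenClaw (it is not an OpenClaw API).

```python
from typing import Callable, Dict


def forward_webhook(payload: dict) -> str:
    # Routine execution: relay data onward, no reasoning required.
    return f"forwarded {payload.get('id')}"


def copy_record(payload: dict) -> str:
    # Routine execution: move a record between systems.
    return f"copied record {payload.get('id')}"


# Task types that plain code can handle (hypothetical examples).
ROUTINE_HANDLERS: Dict[str, Callable[[dict], str]] = {
    "forward_webhook": forward_webhook,
    "copy_record": copy_record,
}


def call_agent(task: dict) -> str:
    # Hypothetical placeholder for an OpenClaw agent call; not a real API.
    return f"agent reasoned about {task['type']}"


def dispatch(task: dict) -> str:
    """Route routine tasks to cheap handlers and reserve the agent for reasoning."""
    handler = ROUTINE_HANDLERS.get(task["type"])
    if handler is not None:
        return handler(task.get("payload", {}))
    return call_agent(task)


if __name__ == "__main__":
    print(dispatch({"type": "copy_record", "payload": {"id": 7}}))
    print(dispatch({"type": "triage_ticket", "payload": {"text": "Login fails"}}))
```

Keeping the routine handlers in a lookup table means adding a new non-AI task never touches the agent path.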

2. Design modular workflows

Rigid workflows become difficult to manage as they grow. Breaking workflows into smaller, modular components makes them easier to scale and maintain.

Each module can handle a specific function such as input processing, decision logic or execution. This structure allows you to optimize or debug individual parts without affecting the entire system, reducing the risk of large-scale failures.
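A minimal Python sketch of this idea, assuming each module is a function that takes and returns a context dictionary. The stage names and the keyword rule in `decide` are hypothetical stand-ins; in a real workflow the decision step might call an agent.

```python
from typing import Callable, List

Stage = Callable[[dict], dict]


def parse_input(ctx: dict) -> dict:
    # Input processing module: normalize the raw request text.
    return {**ctx, "text": ctx["raw"].strip().lower()}


def decide(ctx: dict) -> dict:
    # Decision module: a keyword rule stands in for an agent decision.
    action = "escalate" if "urgent" in ctx["text"] else "queue"
    return {**ctx, "action": action}


def execute(ctx: dict) -> dict:
    # Execution module: record the action that would be carried out.
    return {**ctx, "result": f"performed {ctx['action']}"}


def run_pipeline(stages: List[Stage], ctx: dict) -> dict:
    """Run each module in order, naming the module that fails."""
    for stage in stages:
        try:
            ctx = stage(ctx)
        except Exception as exc:
            raise RuntimeError(f"stage '{stage.__name__}' failed") from exc
    return ctx


PIPELINE: List[Stage] = [parse_input, decide, execute]

if __name__ == "__main__":
    print(run_pipeline(PIPELINE, {"raw": "  URGENT: checkout is down "}))
```

Because each stage is a separate function, you can test or replace one module in isolation, and a failure is reported with the name of the stage that caused it.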

3. Enable asynchronous execution

Sequential workflows create bottlenecks when each step waits for the previous one to finish, even when the steps do not depend on each other. Asynchronous execution lets independent tasks run concurrently, so one slow step does not block the rest of the system.

This is especially useful for workflows involving API calls or external services. By reducing wait times between steps, asynchronous execution improves throughput and overall system performance.
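The sketch below uses Python's `asyncio` to compare sequential and concurrent execution of three simulated API calls. `call_service` is hypothetical and uses `asyncio.sleep` in place of real network latency; the `asyncio.Semaphore` caps how many calls are in flight at once, which matters when external APIs enforce rate limits.

```python
import asyncio
import time
from typing import List, Tuple


async def call_service(name: str, delay: float) -> str:
    # Hypothetical external call; asyncio.sleep simulates network latency.
    await asyncio.sleep(delay)
    return f"{name} done"


async def run_sequential(calls: List[Tuple[str, float]]) -> List[str]:
    # Each call waits for the previous one to finish.
    return [await call_service(name, delay) for name, delay in calls]


async def run_concurrent(calls: List[Tuple[str, float]], limit: int = 5) -> List[str]:
    # At most `limit` calls are in flight at once, to respect API rate limits.
    sem = asyncio.Semaphore(limit)

    async def guarded(name: str, delay: float) -> str:
        async with sem:
            return await call_service(name, delay)

    # gather runs the calls concurrently and returns results in input order.
    return list(await asyncio.gather(*(guarded(n, d) for n, d in calls)))


if __name__ == "__main__":
    calls = [("crm", 0.2), ("email", 0.2), ("search", 0.2)]
    for runner in (run_sequential, run_concurrent):
        start = time.perf_counter()
        results = asyncio.run(runner(calls))
        print(runner.__name__, results, f"{time.perf_counter() - start:.2f}s")
```

With three 0.2-second calls, the sequential version takes roughly 0.6 seconds and the concurrent version roughly 0.2 seconds, though exact timings depend on the machine.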

4. Optimize API interactions

OpenClaw workflows depend heavily on APIs, which can become a bottleneck at scale. Poorly managed API calls can lead to rate limits, delays and failures. To scale effectively, batch requests where possible, implement retry logic and handle failures gracefully. This helps workflows continue running even when external services are slow or unreliable.
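Here is a minimal Python sketch of two of these techniques: grouping records into batches and retrying transient failures with exponential backoff plus random jitter. `TransientError` is a hypothetical exception your API client would raise for retryable responses such as HTTP 429 or 503, and batching only helps if the API you call accepts multiple records per request.

```python
import random
import time
from typing import Callable, Iterable, Iterator, List, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical error for retryable failures such as HTTP 429 or 503."""


def batched(items: Iterable[T], size: int) -> Iterator[List[T]]:
    # Group items so a single request can carry several records.
    if size < 1:
        raise ValueError("size must be at least 1")
    batch: List[T] = []
    for item in items:
        batch.append(item)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:
        yield batch


def with_retry(fn: Callable[[], T], attempts: int = 4, base_delay: float = 0.5,
               sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn, retrying transient failures with exponential backoff and jitter."""
    failures = 0
    while True:
        try:
            return fn()
        except TransientError:
            failures += 1
            if failures >= attempts:
                raise  # out of attempts: let the caller handle the failure
            delay = base_delay * 2 ** (failures - 1)  # 0.5s, 1s, 2s with defaults
            sleep(delay + random.uniform(0, delay / 2))  # jitter spreads out retries


if __name__ == "__main__":
    state = {"calls": 0}

    def flaky_send() -> str:
        # Fails twice, then succeeds, to mimic a rate-limited API.
        state["calls"] += 1
        if state["calls"] < 3:
            raise TransientError("429 Too Many Requests")
        return "ok"

    print(with_retry(flaky_send))
    print(list(batched(range(7), 3)))  # [[0, 1, 2], [3, 4, 5], [6]]
```

The `sleep` parameter is injectable so the retry logic can be tested without real waiting; in production you would leave it as `time.sleep`.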

5. Manage state and memory efficiently

As workflows become more complex, maintaining context across multiple steps becomes critical. Agents need to retain relevant information to produce accurate outputs. Efficient state management ensures workflows can handle long-running processes without losing context. This is essential for multi-step reasoning and consistent execution.
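One simple way to persist context is sketched below in Python, assuming SQLite as the store and a hypothetical schema that keeps a bounded message history per workflow run. This illustrates the pattern only; it is not how OpenClaw itself stores memory, and `ContextStore` and its methods are hypothetical names.

```python
import json
import sqlite3
from typing import List


class ContextStore:
    """Keeps a bounded message history per workflow run (hypothetical schema)."""

    def __init__(self, path: str = "agent_state.db", max_messages: int = 20):
        self.conn = sqlite3.connect(path)
        self.max_messages = max_messages
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS context ("
            "run_id TEXT PRIMARY KEY, messages TEXT NOT NULL)"
        )
        self.conn.commit()

    def load(self, run_id: str) -> List[dict]:
        # Returns an empty history for runs that have no saved state yet.
        row = self.conn.execute(
            "SELECT messages FROM context WHERE run_id = ?", (run_id,)
        ).fetchone()
        return json.loads(row[0]) if row else []

    def append(self, run_id: str, message: dict) -> List[dict]:
        # Drop the oldest messages so the context stays within a fixed budget.
        messages = (self.load(run_id) + [message])[-self.max_messages:]
        with self.conn:  # commits on success, rolls back on error
            self.conn.execute(
                "INSERT OR REPLACE INTO context (run_id, messages) VALUES (?, ?)",
                (run_id, json.dumps(messages)),
            )
        return messages

    def close(self) -> None:
        self.conn.close()


if __name__ == "__main__":
    store = ContextStore(":memory:", max_messages=3)
    for step in range(5):
        store.append("run-1", {"role": "user", "content": f"step {step}"})
    print([m["content"] for m in store.load("run-1")])  # ['step 2', 'step 3', 'step 4']
    store.close()
```

Pointing `path` at a file on disk (rather than `":memory:"`) lets a restarted process pick up where a long-running workflow left off, and the `max_messages` cap keeps stored context from growing without bound.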

For example, in high-concurrency workflows, running multiple agents without separating execution layers can lead to API delays and higher failure rates. Splitting reasoning from execution reduces this load.

Scaling OpenClaw workflows requires both the right infrastructure and thoughtful design. When you combine modular architecture, asynchronous execution and efficient resource usage, your system becomes more reliable and easier to scale.

Now that you understand how to scale OpenClaw workflows at the system level, let’s explore the infrastructure needed to support them.

What infrastructure is required to scale OpenClaw workflows?

To scale OpenClaw workflows for production AI agents, you need infrastructure that can handle high concurrency, persistent memory and continuous execution. As workflows grow, the system must support higher compute demand, faster data processing and reliable integrations.

Basic environments may work for testing, but production setups require stability and control. Below are the key infrastructure components needed to scale OpenClaw workflows effectively.

1. Dedicated compute resources

OpenClaw workflows involve multi-step reasoning, API calls and parallel execution. These operations require consistent CPU and RAM availability. Dedicated resources ensure agents can run without delays caused by resource contention, especially in high-concurrency environments.

2. High-speed storage

Scaling OpenClaw workflows requires efficient handling of memory, logs and intermediate data. High-speed storage such as NVMe SSDs enables faster data access and improves state persistence. This is critical for workflows that depend on retrieving context across multiple steps. Faster storage reduces latency and helps maintain consistent performance across long-running processes.

3. Server-level access

Production workflows often require custom configurations and integrations. Server-level access allows you to install dependencies, manage system settings and integrate OpenClaw with APIs, databases and automation tools.

4. Scalable infrastructure

As workloads grow, your infrastructure must scale with them. The ability to upgrade CPU, RAM and storage without disruption helps maintain consistent performance as more agents and workflows are added.

5. Reliable networking for API-heavy workflows

OpenClaw workflows depend heavily on APIs and internal communication. Reliable networking provides low latency, stable connections and consistent data transfer, all of which directly affect execution speed and success rates.

A production-ready OpenClaw setup requires infrastructure that balances performance, flexibility and scalability. Now that you understand the infrastructure requirements, let’s look at why VPS hosting is well suited to meeting them.

Also read: VPS Requirements for OpenClaw: Sizing Your AI Infrastructure

Why is VPS hosting essential for scaling OpenClaw?

VPS hosting is essential for scaling OpenClaw workflows because it provides a controlled environment where AI agents can run reliably under increasing workload and complexity. As workflows move from experimentation to production, VPS ensures consistent performance, flexibility and system stability. Here’s how VPS directly supports scaling OpenClaw workflows:

1. Enables parallel agent execution

Scaling OpenClaw workflows often involves running multiple AI agents at the same time. Each agent processes inputs, executes logic and interacts with APIs concurrently. A VPS environment provides dedicated resources that allow these agents to run in parallel without interfering with each other. This ensures smooth execution even in high-concurrency scenarios.

2. Prevents performance bottlenecks

Shared environments can introduce unpredictable slowdowns when resources are distributed across multiple users. This becomes a major issue as workflows scale. With VPS hosting, dedicated CPU and RAM allocations reduce resource contention. This helps maintain consistent execution speed and limits workflow delays during peak loads.

3. Supports long-running and persistent workflows

OpenClaw workflows often run continuously and depend on maintaining context across multiple steps. These long-running processes require a stable environment to avoid interruptions. VPS infrastructure ensures uptime and persistence, allowing workflows to execute reliably without unexpected resets or failures.

4. Allows dynamic resource scaling

As OpenClaw workflows grow in complexity, resource requirements increase. This includes higher CPU usage, more memory and additional storage for logs and state management. VPS hosting allows teams to scale resources without redesigning their system. This flexibility supports gradual growth while maintaining performance.

5. Enables full system customization

Production AI workflows require custom environments, dependencies and integrations. These cannot be managed effectively in restricted hosting environments. With VPS, developers get full control over the server. This allows them to configure workflows, install tools and optimize the system based on their specific use cases.

6. Supports complex integrations and automation

OpenClaw workflows depend on multiple external systems such as APIs, databases and automation tools. Managing these integrations requires flexibility and control. VPS environments support seamless integration across systems, making it easier to build connected workflows that operate reliably at scale.

Recommended VPS configurations for OpenClaw workloads

| Feature | Entry-level workloads | Advanced workloads |
| --- | --- | --- |
| Best for | Prototyping, single-agent workflows, low concurrency | Multi-agent systems, high concurrency, complex reasoning |
| RAM | 2 GB or 4 GB | 8 GB or 16 GB |
| Storage | 50 GB or 100 GB NVMe | 200 GB or 450 GB NVMe |
| vCPU cores | 1 to 2 cores | 4 to 8 cores |
| Performance | Basic automation and testing | Parallel execution and production workloads |
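To check which column an existing server falls into, here is a rough Python helper. The minimums come from the table above; the 10% slack is an assumption to allow for the operating system reporting slightly less RAM and disk than the plan size, and the `os.sysconf` memory keys are available on Linux but not on every platform.

```python
import os
import shutil

# Minimums taken from the table above.
ENTRY_MIN = {"vcpu": 1, "ram_gb": 2, "storage_gb": 50}
ADVANCED_MIN = {"vcpu": 4, "ram_gb": 8, "storage_gb": 200}
# Assumption: allow 10% slack because the OS reports a little less RAM and
# disk than the advertised plan size.
SLACK = 0.9


def meets(res: dict, minimum: dict) -> bool:
    return (
        res["vcpu"] >= minimum["vcpu"]
        and res["ram_gb"] >= minimum["ram_gb"] * SLACK
        and res["storage_gb"] >= minimum["storage_gb"] * SLACK
    )


def classify(res: dict) -> str:
    """Map a server's resources to a column of the table (a rough guide only)."""
    if meets(res, ADVANCED_MIN):
        return "advanced"
    if meets(res, ENTRY_MIN):
        return "entry-level"
    return "below entry-level"


def local_resources() -> dict:
    # The os.sysconf memory keys exist on Linux; other platforms may lack them.
    ram_bytes = os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES")
    return {
        "vcpu": os.cpu_count() or 1,
        "ram_gb": ram_bytes / 1024**3,  # RAM plans are usually sized in binary GB
        "storage_gb": shutil.disk_usage("/").total / 1e9,  # disk plans use decimal GB
    }


if __name__ == "__main__":
    res = local_resources()
    print({k: round(v, 1) for k, v in res.items()}, "->", classify(res))
```

Treat the result as a starting point; actual needs depend on how many agents run concurrently and how heavy their workloads are.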

Also read: How to Run OpenClaw 24/7 on a VPS

How does Bluehost VPS support OpenClaw scaling?

Bluehost VPS hosting is designed for teams that build and scale self-hosted AI systems. Our infrastructure supports OpenClaw deployments with dedicated resources, flexible configuration and simplified setup, helping teams move from experimentation to production faster.

Here’s how our platform enables OpenClaw workflow scaling:

1. One-click OpenClaw deployment for faster setup

Deploying OpenClaw manually can be time-consuming and error-prone. With Bluehost VPS, we provide one-click OpenClaw deployment, allowing you to launch a self-hosted AI environment in minutes. This removes setup complexity and helps teams start building AI workflows immediately.

2. Dedicated infrastructure for reliable AI execution

Scaling OpenClaw workflows requires consistent compute performance. Our VPS platform provides dedicated CPU, RAM and storage resources, ensuring AI agents can run concurrently without performance fluctuations. This supports high-concurrency workflows and continuous automation.

3. Supports full AI orchestration and workflow pipelines

OpenClaw enables AI orchestration through structured workflows, prompt pipelines and multi-step reasoning. Our VPS environment supports these capabilities by allowing seamless integration with APIs, internal systems and automation tools. This makes it easier to build scalable AI workflows that operate reliably across systems.

4. Enables owned automation stack with n8n integration

With Bluehost VPS, teams can deploy both OpenClaw and n8n on the same infrastructure. This creates a fully owned automation stack where:

- OpenClaw handles AI reasoning and agent execution

- n8n manages event-driven workflows and integrations

This separation improves performance, reduces bottlenecks and enables scalable automation systems.
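As an illustration of the handoff between the two, here is a Python sketch that sends an agent's decision to an n8n workflow triggered by a Webhook node. It assumes n8n runs on the same VPS on its default port 5678 and that the workflow's Webhook node uses the hypothetical path `openclaw-result`; the payload fields are also hypothetical.

```python
import json
import urllib.request

# Assumption: n8n listens locally on its default port on the same VPS.
N8N_BASE_URL = "http://127.0.0.1:5678"


def build_handoff(path: str, payload: dict,
                  base_url: str = N8N_BASE_URL) -> urllib.request.Request:
    """Prepare a POST that hands an agent's decision to an n8n Webhook node."""
    return urllib.request.Request(
        url=f"{base_url}/webhook/{path}",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_handoff(req: urllib.request.Request, timeout: float = 10.0) -> int:
    # Network call: returns the HTTP status code n8n responds with.
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.status


if __name__ == "__main__":
    decision = {"ticket_id": 42, "action": "escalate", "reason": "customer reports outage"}
    req = build_handoff("openclaw-result", decision)
    print(req.get_method(), req.full_url)
    # send_handoff(req)  # uncomment once the n8n workflow is active
```

n8n serves active workflows under `/webhook/` and test runs under `/webhook-test/`, so adjust the URL while you are building the workflow in the editor.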

5. Scalable infrastructure for evolving AI workloads

As your OpenClaw workflows grow, your infrastructure must scale with them. Our VPS platform allows you to upgrade CPU, RAM and storage as needed, ensuring your system can handle increasing complexity, concurrency and workload demands.

Bluehost also supports fast deployment and flexible configurations, helping teams build and scale private AI systems efficiently. While infrastructure provides the foundation, scaling OpenClaw workflows also depends on how you design your system architecture.

Also read: How to Host OpenClaw for API Integrations: A Practical Engineering Guide

Final thoughts

Scaling OpenClaw workflows is not just a matter of adding more resources. It involves designing systems that can handle concurrency, manage memory and execute workflows reliably in production environments. As your AI agents grow in complexity, infrastructure, architecture and workflow design must work together to maintain performance.

At Bluehost, we provide VPS infrastructure that supports these requirements with dedicated resources, scalable configurations and full server control. This allows teams to build and run private AI systems without external limitations. If you are ready to move from experimentation to production, the right foundation matters. Deploy OpenClaw on Bluehost VPS and start building scalable AI systems today.

How this guide was created

This guide is based on common scaling patterns observed in self-hosted AI workflows and automation systems. It reflects practical approaches used in VPS-based environments for handling concurrency, API-heavy workloads, and multi-step execution. Results may vary depending on workload complexity, API dependencies and infrastructure configuration.

Sources and references

- OpenClaw official documentation (installation and runtime requirements)

- n8n documentation (workflow automation and orchestration)

- Docker documentation (container setup and requirements)

- OWASP guidelines (secrets management and application security)

- Bluehost VPS product documentation (features and capabilities)

Frequently asked questions

Can OpenClaw run on shared hosting?

No, OpenClaw generally cannot run on shared hosting. It requires server-level access and container support such as Docker, which shared hosting environments typically do not provide. Specific requirements may vary based on your setup, so it is best to verify with official documentation.

What are the trade-offs of self-hosting OpenClaw?

Self-hosting OpenClaw provides greater control over infrastructure and data, but it also introduces responsibility for security, maintenance and updates.

Can I upgrade my VPS resources as my OpenClaw workloads grow?

At Bluehost, upgrading your server capacity is a completely seamless process. You can easily add more vCPU, RAM or NVMe storage directly from your hosting dashboard without migrating servers.

How do n8n and OpenClaw differ?

The n8n platform excels at rule-based, event-driven API integrations. OpenClaw is specifically designed for autonomous AI agents and complex multi-step reasoning. They complement each other and can be deployed together on the same server.

What support does Bluehost provide for OpenClaw?

Bluehost provides 24/7 infrastructure support to ensure your physical server remains online. However, managing the OpenClaw software and debugging custom agents requires your own technical expertise.

Write A Comment